AI, explain yourself!

“It’s time for AI to move out its adolescent, game-playing phase and take seriously the notions of quality and reliability.”

There is an interesting commentary, with interviews, by Don Monroe in the recent Communications of the ACM, November 2018, Volume 61, Number 11, Pages 11-13, doi:

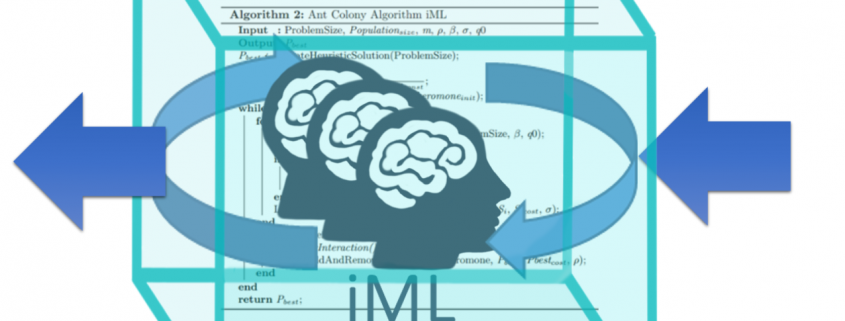

Artificial Intelligence (AI) systems are taking over a vast array of tasks that previously depended solely on human expertise and judgment. Often, however, the “reasoning” behind their actions is unclear, and they can produce surprising errors or reinforce biased processes. One way to address this issue is to make AI “explainable” to humans: for example, to designers who can then improve it, or to users who need to know when to trust it. Although the best styles of explanation for different purposes are still being studied, they will profoundly shape how future AI is used.

Some explainable AI, or XAI, has long been familiar, as part of online recommender systems: book purchasers or movie viewers see suggestions for additional selections described as having certain similar attributes, or being chosen by similar users. The stakes are low, however, and occasional misfires are easily ignored, with or without these explanations.
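To make the recommender case concrete, here is a minimal sketch of an attribute-based explanation. It is an illustration only, not how any real store or streaming service works: the catalogue, the titles' attribute tags, and the function names `recommend_with_explanation` and `explain` are all hypothetical, and Jaccard similarity is simply one convenient way to score overlap.

```python
# Hypothetical catalogue (assumption): title -> set of descriptive attributes.
CATALOG = {
    "Dune": {"science fiction", "epic", "politics"},
    "Foundation": {"science fiction", "epic", "empire"},
    "Emma": {"romance", "classic", "comedy"},
}


def recommend_with_explanation(purchased, catalog):
    """Return (title, shared_attributes) for the most similar other item.

    Returns None when no other item shares any attribute with `purchased`.
    """
    liked = catalog[purchased]
    best = None  # (score, title, shared attributes)
    for title, attrs in catalog.items():
        if title == purchased:
            continue
        shared = liked & attrs
        # Jaccard similarity: size of the overlap divided by size of the union.
        score = len(shared) / len(liked | attrs)
        if shared and (best is None or score > best[0]):
            best = (score, title, shared)
    if best is None:
        return None
    _, title, shared = best
    return title, sorted(shared)


def explain(purchased, catalog):
    """Phrase the recommendation as a human-readable explanation."""
    result = recommend_with_explanation(purchased, catalog)
    if result is None:
        return f"No recommendation for {purchased!r}."
    title, shared = result
    return (
        f"Recommended {title!r} because it shares "
        f"{', '.join(shared)} with {purchased!r}."
    )


if __name__ == "__main__":
    print(explain("Dune", CATALOG))
```

The explanation here is just the list of shared attributes that drove the score, which is why this kind of system is easy to explain: the reason shown to the user is the same quantity the system actually computed.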

“Considering the internal complexity of modern AI, it may seem unreasonable to hope for a human-scale explanation of its decision-making rationale.”

Read the full article here:

https://cacm.acm.org/magazines/2018/11/232193-ai-explain-yourself/fulltext