Mini Course on Medical Decision Support:

From Data Science to Explainable AI

“It is remarkable that a science which began with the consideration of games of chance should have become the most important object of human knowledge”

Pierre Simon de Laplace, 1812.

Winter Term 2019 (next lecture: September 2019, Vienna, 3 ECTS at the WU Executive Academy)

Short Description: This Mini-Course is an introduction to a core area of health informatics. It helps students understand decision making in general and, specifically, how human intelligence can be augmented by Artificial Intelligence (AI) and Machine Learning (ML) (-> What is the difference between AI/ML?). Andreas Holzinger has taught this course in various versions and durations since 2005.

This page is valid as of August 21, 2019, 06:00 MST

Welcome, students, to the class of 2019

1) Introduction Paper:

HOLZINGER (2016) Machine Learning for Health Informatics.

2a) German-speaking students can additionally read this:

https://link.springer.com/article/10.1007/s00287-018-1102-5

2b) Explainable-AI for the medical domain:

https://arxiv.org/abs/1712.09923

3) Introduction Video:

https://www.youtube.com/watch?v=lc2hvuh0FwQ

4) Attend class and enjoy the coffee breaks

5) Take the exam successfully!

Module-Quizzes available via Socrative:

https://b.socrative.com/login/student/

(Room Name will be given during the lecture)

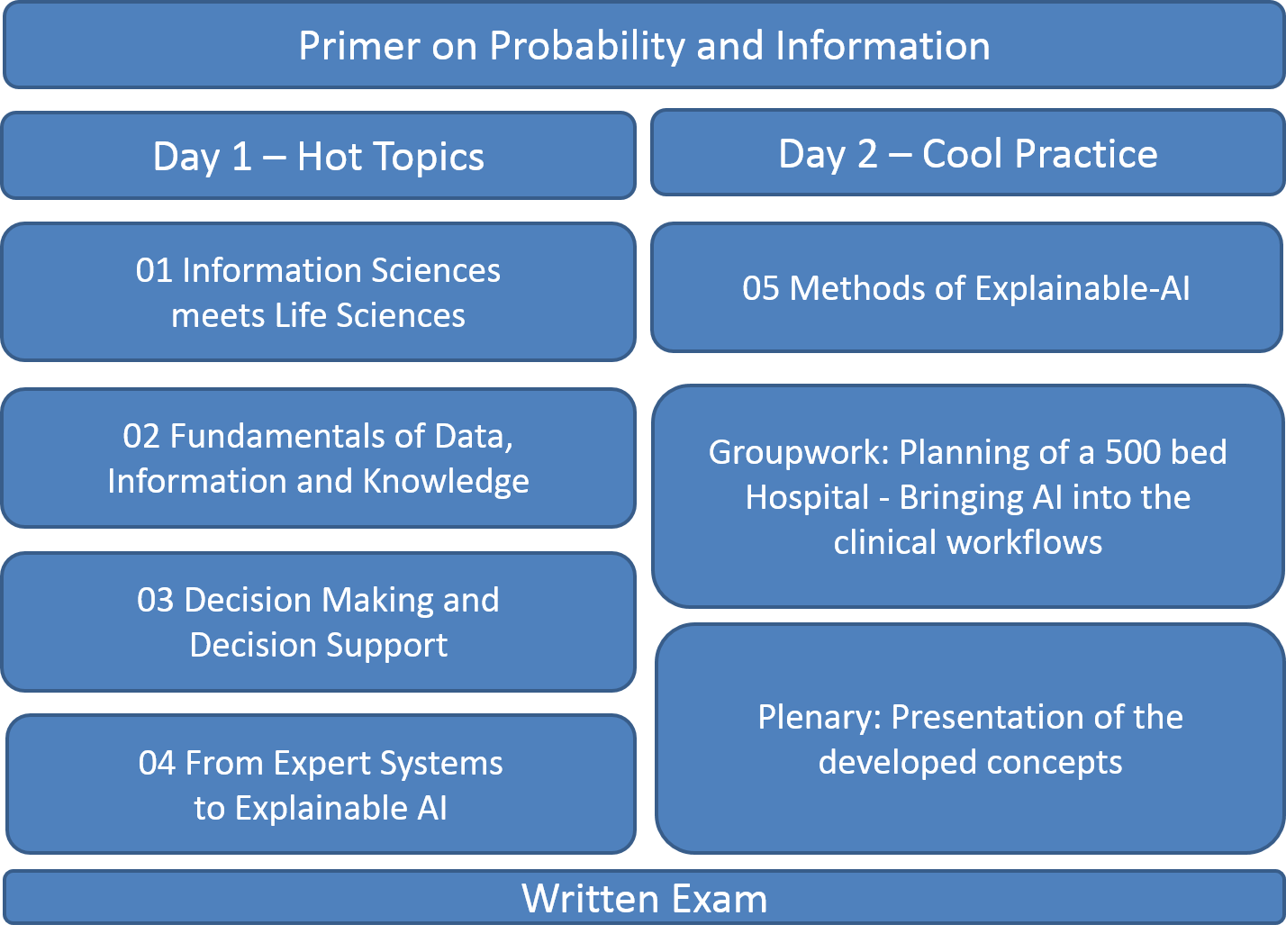

Module 00 – Primer on Probability and Information (optional)

When confronted with decision making, we must inherently deal with uncertainty. The strength of probabilistic machine learning comes from its mathematical, and thus computable, mechanisms for combining prior knowledge with incoming new data.

Topic 00: Mathematical Notations

Topic 01: Probability Distribution and Probability Density

Topic 02: Expectation and Expected Utility Theory

Topic 03: Joint Probability and Conditional Probability

Topic 04: Independent and Identically Distributed Data (IIDD)

Topic 05: Bayes and Laplace

Topic 06: Measuring Information: Kullback-Leibler Divergence and Entropy
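The combination of prior knowledge with incoming new data (Topic 05) can be illustrated with a minimal sketch of Bayes' rule for a diagnostic test. The function name `posterior` and all numbers (prevalence, sensitivity, specificity) are hypothetical and chosen only for illustration; they are not taken from the lecture slides. Applying the update twice assumes that the two test results are conditionally independent given the disease status.

```python
def posterior(prior: float, sensitivity: float, specificity: float) -> float:
    """Return P(disease | positive test) via Bayes' rule."""
    true_pos = sensitivity * prior                    # P(positive, disease)
    false_pos = (1.0 - specificity) * (1.0 - prior)   # P(positive, no disease)
    return true_pos / (true_pos + false_pos)


# Hypothetical values: 1% prevalence, 90% sensitivity, 95% specificity.
belief = 0.01
for test_number in (1, 2):
    # The posterior after one positive test becomes the prior for the next
    # (assumes conditionally independent test results).
    belief = posterior(belief, sensitivity=0.90, specificity=0.95)
    print(f"after positive test {test_number}: {belief:.3f}")
# after positive test 1: 0.154
# after positive test 2: 0.766
```

Even with a fairly accurate test, a single positive result leaves the probability of disease low because the prior (prevalence) is low; the second result shifts the belief much further.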

Lecture slides full size (3,984 kB): 0-PRIMER-Probability-and-Information-2019-09-18-HOLZINGER-print

Reading for students:

David J.C. MacKay 2003. Information theory, inference and learning algorithms, Cambridge, Cambridge University Press.

Online available: https://www.inference.org.uk/itprnn/book.html

Slides online available: https://www.inference.org.uk/itprnn/Slides.shtml

Another excellent resource is David Poole, Alan Mackworth & Randy Goebel 1998. Computational intelligence: a logical approach, New York, Oxford University Press. A new edition is available online, along with excellent student resources:

https://artint.info/2e/html/ArtInt2e.html

Module 01 – Introduction: Information Sciences meets Life Sciences

Topic 01: The HC-AI approach: Towards integrative AI/ML

Topic 02: The complexity of the application area Health Informatics

Topic 03: Probabilistic Information

Topic 04: Automatic Machine Learning (aML)

Topic 05: Interactive Machine Learning (iML)

Topic 06: Causality, Explainability, Interpretability

Lecture slides 3×3 (pdf, 4,395 kB): 1-INTRO-MiniCourse-2019-09-18-HOLZINGER-print-3×3

Lecture slides full size (pdf, 6,260 kB): 1-INTRO-MiniCourse-2019-09-18-HOLZINGER-print

Module 02 – Data, Information and Knowledge Representation

Topic 00: Reflection – follow-up from last lecture

Topic 01: What is data? The underlying physics of data

Topic 02: On Standardization

Topic 03: Knowledge Representation

Topic 04: Biomedical Ontologies

Topic 05: Medical Classifications

Lecture slides 2×2 (pdf, 6,824 kB): 2-DATA-MAKE-Decision-20180919-final-2×2

Lecture slides full size (pdf, 3,825 kB): 2-DATA-MAKE-Decision-20180919-final

Exam-Quiz via Socrative

Module 03 – Decision Making and Decision Support

Topic 00: Reflection – follow-up from last lecture

Topic 01: Medical Action = Decision Making

Topic 02: Cognition

Topic 03: Human vs. Computer

Topic 04: Human Information Processing

Topic 05: Probabilistic Decision Theory

Lecture slides 2×2 (pdf, 4,580 kB): 3-DECISION-MAKE-Decision-20180919-final-2×2

Lecture slides full size (pdf, 7,546 kB): 3-DECISION-MAKE-Decision-20180919-final

Exam-Quiz via Socrative

Module 04 – From Expert Systems to Explainable AI

Topic 00: Reflection – follow-up from last lecture

Topic 01: Decision Support Systems (DSS)

Topic 02: Do computers help to make better decisions?

Topic 03: History of DSS = History of Artificial Intelligence

Topic 04: Example: Towards Personalized Medicine

Topic 05: Example: Case Based Reasoning (CBR)

Topic 06: Towards explainable AI (ex-AI)

Lecture slides 2×2 (pdf, 6,167 kB): 4-EXPLANATION-MAKE-Decision-20180919-final-2×2

Lecture slides full size (pdf, 4,278 kB): 4-EXPLANATION-MAKE-Decision-20180919

Exam-Quiz via Socrative

Module 05 – Methods of Explainable AI and Causal Reasoning

Topic 00: Reflection – follow-up from last lecture

Topic 01: Towards explainable AI

Topic 02: Causal Reasoning

Topic 03: Trade-off: Explainability versus Accuracy

Topic 04: (Some) Current State-of-the-Art Methods

Topic 05: Stochastic And-or-graphs (AOG)

Lecture slides 2×2 (pdf, 4,235 kB): 5-METHODS-EXPLAINABLE-AI-MAKE-Decision-20180920-2×2

Lecture slides full size (pdf, 6,548 kB): 5-METHODS-EXPLAINABLE-AI-MAKE-Decision-20180920

Exam-Quiz via Socrative

Practical Work: Planning a 500-bed hospital IT infrastructure – how to bring AI/ML directly into the clinical workflow

In a role-play group exercise, the task is to optimize clinical/medical workflows with AI/machine learning tools for decision support and quality enhancement. An important requirement is to consider social, ethical and legal aspects and to enable accountable, interpretable, replicable and ethically responsible AI enhancements, fostering security, safety and trust.

Exam-Questions: (personally handed out)

About the Lecturer:

Andreas Holzinger promotes a synergistic approach to Human-Centred AI (HCAI) and has pioneered interactive machine learning (iML) with the human-in-the-loop. He promotes an integrated machine learning approach with the goal of augmenting human intelligence with artificial intelligence to help solve problems in health informatics.

Due to rising ethical, social and legal issues governed by the European Union, future AI-supported systems must be made transparent and re-traceable, and thus human-interpretable. Andreas’ aim is to explain why a machine decision has been reached, paving the way towards explainable AI and Causability, ultimately fostering ethically responsible machine learning, trust and acceptance for AI.

Andreas obtained a Ph.D. in Cognitive Science from Graz University in 1998 and his Habilitation (second Ph.D.) in Computer Science from Graz University of Technology in 2003. Andreas was Visiting Professor for Machine Learning & Knowledge Extraction in Verona, RWTH Aachen, University College London and Middlesex University London. Since 2016 Andreas has been Visiting Professor for Machine Learning in Health Informatics at the Faculty of Informatics at Vienna University of Technology. Currently, Andreas is Visiting Professor for explainable AI at the Alberta Machine Intelligence Institute, University of Alberta, Canada.

Andreas Holzinger leads the Holzinger Group (HCAI-Lab), Institute for Medical Informatics/Statistics at the Medical University Graz, and is Associate Professor of Applied Computer Science at the Faculty of Computer Science and Biomedical Engineering at Graz University of Technology. He serves as a consultant for the Canadian, US, UK, Swiss, French, Italian and Dutch governments, for the German Excellence Initiative, and as a national expert in the European Commission. He is on the advisory board of the Artificial Intelligence Strategy "AI made in Germany" of the German Federal Government and on the advisory board of the Artificial Intelligence Mission Austria 2030.

Personal Homepage: https://www.aholzinger.at

Video for Students: https://youtu.be/lc2hvuh0FwQ

Group Homepage: https://human-centered.ai

Google Scholar: https://scholar.google.com/citations?hl=en&user=BTBd5V4AAAAJ&view_op=list_works&sortby=pubdate

Additional study material:

Course Biomedical Informatics – Discovering Knowledge in (big) data (1 semester – 12 lectures – 3 ECTS):

https://human-centered.ai/biomedical-informatics-big-data/