What is the difference between Artificial Intelligence (AI), Machine Learning (ML) and Deep Learning (DL)?

My students repeatedly ask the question: “What is the difference between Artificial Intelligence (AI) and Machine Learning (ML), and does deep learning (DL) belong to AI or to ML?” In the following I provide I) a brief answer, II) a formal short answer and III) a more elaborate answer:

I) Brief answer: They are the same and they are different. Deep Learning can belong to both, and both are necessary. This explains well why HCI-KDD, or better Human-Centred AI (HCAI), is so enormously important: Human–Computer Interaction (HCI) deals mainly with aspects of human perception, human cognition, human intelligence, sense-making and the interaction between human and machine. Knowledge Discovery from Data (KDD) deals mainly with artificial intelligence, computational intelligence and the development of algorithms for automatic and interactive data mining [1].

II) A formal short answer:

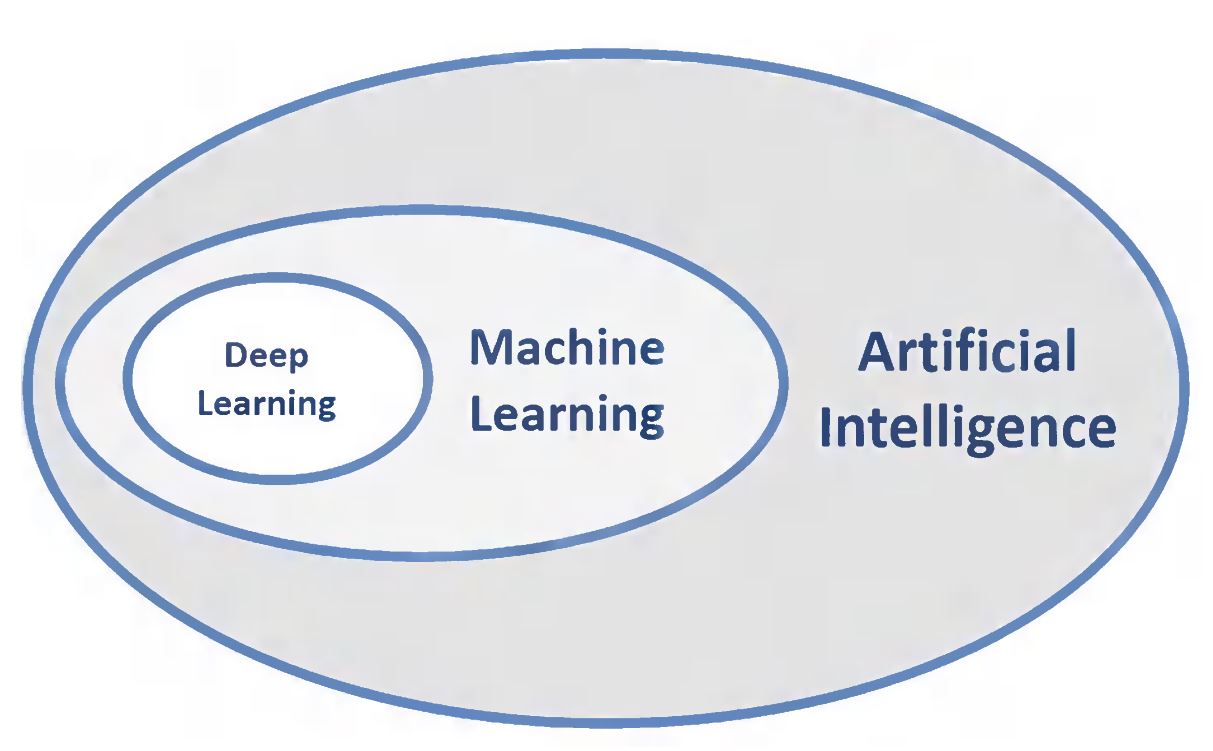

Deep Learning is part of Machine Learning, which in turn is part of Artificial Intelligence.

$\mathrm{DL} \subset \mathrm{ML} \subset \mathrm{AI}$

Figure 1 from: Andreas Holzinger, Peter Kieseberg, Edgar Weippl & A Min Tjoa 2018. Current Advances, Trends and Challenges of Machine Learning and Knowledge Extraction: From Machine Learning to Explainable AI. Springer Lecture Notes in Computer Science LNCS 11015. Cham: Springer, pp. 1-8, doi:10.1007/978-3-319-99740-7_1

[Preprint available at PURE TU Graz]

This follows the popular Deep Learning book by Ian Goodfellow, Yoshua Bengio and Aaron Courville, published by MIT Press in 2016 [2]. Here is the explanation why:

This leads us to:

III) A more elaborate answer:

Artificial Intelligence (AI) is the field that works on understanding intelligence. The motto of Google DeepMind is “understand intelligence – then understand everything else” (Demis HASSABIS). Consequently, the study of human intelligence is of utmost importance for understanding machine intelligence. The long-term goal of AI is general intelligence (“strong AI”). AI has always had a close connection to cognitive science and is indeed a very old scientific field; possibly computer science started with it (Alan Turing). After a first hype between 1950 and 1980 and a subsequent AI winter, it has regained hype status because of the practical success of machine learning, and very recently of deep learning in particular (although its roots go back to the early days of AI, e.g. [3]). Recently DARPA described this well (DARPA Perspective on Artificial Intelligence by John LAUNCHBURY, an excellent video that I highly recommend my students watch):

According to DARPA's perspective on AI, there are three waves of AI, namely DESCRIBE, CATEGORIZE and EXPLAIN:

I) DESCRIBE The first wave was a kind of programmed ability to process information, i.e. engineers handcrafted a set of rules to represent knowledge in narrow, well-defined domains. The structure of this knowledge is defined by human experts, and the specifics of the domain are explored by computers. Interestingly, these first approaches were explainable (see explainable AI).

II) CATEGORIZE The second wave of AI is the success of statistical/probabilistic learning, i.e. engineers create statistical models for specific problem domains and train them, preferably on very big data sets. (BTW: John LAUNCHBURY emphasizes the importance of geometric models for machine learning, e.g. manifolds in topological data analysis, which is exactly what we foster; see CD-MAKE Topology and this beautiful recent article by Massimo FERRI.) Currently neural networks (deep learning) show tremendously interesting successes (see e.g. a recent work from our own group [4]).

III) EXPLAIN The future third wave will have to focus on explainable AI, i.e. contextual adaptation (understanding the context, which to date needs a human-in-the-loop!), and make models able to explain how an algorithm came to a decision (see my post on transparency and trust in machine learning, our recent paper [7], and our iML project page). In essence, ALL three waves are necessary in the future, and the combination of various methods promises success!
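The contrast between the first two waves can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the temperature data, the 38.0 °C threshold and all function names are invented for this example and do not come from the DARPA talk. The first function is a handcrafted rule that can state why it decided; the second approach learns one mean value per class from data (a nearest-centroid classifier) and returns only a label.

```python
# Toy data invented for illustration: (body temperature in °C, label).
TRAINING_DATA = [
    (36.5, "healthy"), (36.8, "healthy"), (37.0, "healthy"),
    (38.6, "fever"), (39.1, "fever"), (39.5, "fever"),
]


def rule_based(temp_c):
    """First wave: a handcrafted rule; the rule itself is the explanation."""
    if temp_c >= 38.0:  # threshold chosen by a (hypothetical) human expert
        return "fever", "rule: temperature >= 38.0 °C"
    return "healthy", "rule: temperature < 38.0 °C"


def fit_centroids(data):
    """Second wave: estimate one mean value (centroid) per class from data."""
    sums, counts = {}, {}
    for value, label in data:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def predict(centroids, temp_c):
    """Assign the class whose learned centroid is closest to the input."""
    return min(centroids, key=lambda label: abs(centroids[label] - temp_c))


centroids = fit_centroids(TRAINING_DATA)
print(rule_based(38.4))          # label plus the rule that fired
print(predict(centroids, 38.4))  # label only, no explanation attached
```

Both approaches classify the same input, but only the rule-based one returns a reason together with its answer; closing that gap for learned models is what the third wave is about.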

It shall be emphasized that Machine Learning (ML) is the workhorse of AI: it gains knowledge from experience and improves its learning behaviour over time [5]. AI is a much broader term; it also includes philosophical, social and ethical aspects and provides the broader foundation for ML. It encompasses the underlying scientific theories of human learning vs. machine learning. ML itself is a very practical field with countless practical applications. The introduction by Sebastian Thrun (Stanford) and Katie Malone (moderator at Linear Digressions) makes this point beautifully and shows how important machine learning is for business:

Deep Learning (DL) is one methodological family of ML based on, e.g., artificial neural networks (ANN), deep belief networks or recurrent neural networks. A precise example of a feed-forward ANN is the multilayer perceptron (MLP), which is a very simple mathematical function mapping a set of input data to output data. The underlying concept is representation learning: complex representations are expressed in terms of other, simpler representations. Maybe this is how our brain works [6], but we do not know yet.
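To make "a mathematical function mapping input data to output data" concrete, here is a minimal sketch of an MLP forward pass in plain Python. The weights below are hypothetical and hand-picked for illustration, not trained, and the names `dense`, `relu`, `sigmoid` and `mlp` are my own. The hidden layer builds a simpler intermediate representation (the two one-sided differences of the inputs), and the output layer combines them, so that the network scores the XOR of two binary inputs.

```python
import math


def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum of the inputs plus a bias per unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in values]


def sigmoid(value):
    """Squash a real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-value))


# Hypothetical, hand-picked parameters (assumption: not learned from data).
W1 = [[1.0, -1.0], [-1.0, 1.0]]  # hidden unit 1: x1 - x2, hidden unit 2: x2 - x1
B1 = [0.0, 0.0]
W2 = [[1.0, 1.0]]                # output: sum of the two hidden units
B2 = [-0.5]


def mlp(x):
    """Input -> hidden representation -> output score in (0, 1)."""
    hidden = relu(dense(x, W1, B1))
    return sigmoid(dense(hidden, W2, B2)[0])


for x in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]):
    print(x, round(mlp(x), 3))
```

With these weights the score is above 0.5 exactly when the two inputs differ. In practice the parameters would be learned from data rather than set by hand; the sketch only shows the function that such a network computes.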

A nice example is the recognition of a cat (at 2m11s):

However, this immediately lets us understand the huge shortcomings of these approaches: while these algorithms nicely recognize a cat, they cannot explain why it is a cat, i.e. how they came to this conclusion. Consequently, the next level of machine learning and artificial intelligence is explainable AI, driven mostly by legal requirements on re-traceability and transparency [8], mainly in application areas that affect human life (e.g. agriculture, climate, forestry, health, …).

A final note to my students: computational intelligence (you may call it either Artificial Intelligence (AI) or Machine Learning (ML)) may help to solve problems, particularly in areas where humans have limited capacities (e.g. in high dimensional spaces, large numbers, big data, etc.); however, we must acknowledge that the problem-solving capacity of the human mind is still unbeaten in certain aspects (e.g. in the lower dimensions, little data, complex problems, etc.). A strategic aim in finding solutions for data-intensive problems is to combine our two areas effectively: Human–Computer Interaction (HCI) and Knowledge Discovery (KDD).

A proverb attributed, perhaps incorrectly, to Albert Einstein (many proverbs are attributed to famous persons to make them appealing) illustrates this perfectly: “Computers are incredibly fast, accurate, but stupid. Humans are incredibly slow, inaccurate, but brilliant. Together they may be powerful beyond imagination”. Consequently, the novel approach of combining HCI & KDD in order to enhance human intelligence by computational intelligence fits perfectly with AI and ML together [1].

References:

[1] Holzinger, A. 2013. Human–Computer Interaction and Knowledge Discovery (HCI-KDD): What is the benefit of bringing those two fields to work together? In: Cuzzocrea, Alfredo, Kittl, Christian, Simos, Dimitris E., Weippl, Edgar & Xu, Lida (eds.) Multidisciplinary Research and Practice for Information Systems, Springer Lecture Notes in Computer Science LNCS 8127. Heidelberg, Berlin, New York: Springer, pp. 319-328, doi:10.1007/978-3-642-40511-2_22.

[2] Goodfellow, I., Bengio, Y. & Courville, A. 2016. Deep Learning, Cambridge (MA), MIT Press.

[3] McCulloch, W. S. & Pitts, W. 1943. A logical calculus of the ideas immanent in nervous activity. Bulletin of Mathematical Biophysics, 5, (4), 115-133, doi:10.1007/BF02459570.

[4] Singh, D., Merdivan, E., Psychoula, I., Kropf, J., Hanke, S., Geist, M. & Holzinger, A. 2017. Human Activity Recognition Using Recurrent Neural Networks. In: Holzinger, Andreas, Kieseberg, Peter, Tjoa, A. Min & Weippl, Edgar (eds.) Machine Learning and Knowledge Extraction: Lecture Notes in Computer Science LNCS 10410. Cham: Springer International Publishing, pp. 267-274, doi:10.1007/978-3-319-66808-6_18.

[5] Holzinger, A. 2017. Introduction to Machine Learning and Knowledge Extraction (MAKE). Machine Learning and Knowledge Extraction, 1, (1), 1-20, doi:10.3390/make1010001.

[6] Hinton, G. E. & Shallice, T. 1991. Lesioning an attractor network: Investigations of acquired dyslexia. Psychological Review, 98, (1), 74.

[7] Holzinger, A., Plass, M., Holzinger, K., Crisan, G.C., Pintea, C.-M. & Palade, V. 2017. A glass-box interactive machine learning approach for solving NP-hard problems with the human-in-the-loop. arXiv:1708.01104.

[8] Stoeger, K., Schneeberger, D. & Holzinger, A. 2021. Medical artificial intelligence: the European legal perspective. Communications of the ACM, 64, (11), 34-36, doi:10.1145/3458652.

Some recent related work:

[1] Andreas Holzinger, Peter Kieseberg, Edgar Weippl & A Min Tjoa 2018. Current Advances, Trends and Challenges of Machine Learning and Knowledge Extraction: From Machine Learning to Explainable AI. Springer Lecture Notes in Computer Science LNCS 11015. Cham: Springer, pp. 1-8, doi:10.1007/978-3-319-99740-7_1. [Preprint available here]